The Unbearable Anthropomorphization of a Parrot's Being

The purpose of this text is to explain how Large Language Models (LLMs) work and to propose some suggestions to help non-technical people understand this technology. The second objective is to entertain, as this facilitates learning.

How a computer understands text

A computer is really just a big calculator: it works on numbers, not words. To process text, it first has to turn that text into numbers. This has had mixed results, as anyone who has tried to use automatic translators has painfully experienced. Translation consists of reading and understanding the original text and writing it anew in the target language, and the quality of the result depends directly on how well the original was understood.

The problem, therefore, was to find a digital representation of words that would reflect their meaning. The winner in this category turned out to be the vector, which is like one row of an Excel sheet with a number in each cell. These numbers somehow encode the intensity of certain features of the word. One such feature is, for example, a position on the continuum between masculinity and femininity. The word beauty will probably lean feminine, and the word strength masculine. A vector is like the DNA of a word, encoding a whole range of associations tied to it. This DNA is not designed directly by humans; it emerges on its own while a neural network is being trained, which we will talk about later.
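
To make the Excel row less abstract, here is a minimal sketch of words as vectors. Everything in it is an assumption made for illustration: the three named features, the numbers in each cell, and the choice of cosine similarity as the way to compare two rows. Real models learn hundreds or thousands of features that nobody has named.

```python
import math

# Hypothetical, hand-picked feature values, purely for illustration.
# Columns: [femininity (-1 masculine .. +1 feminine), royalty, edibility]
word_vectors = {
    "queen": [0.9, 0.9, 0.0],
    "princess": [0.9, 0.7, 0.0],
    "king": [-0.5, 1.0, 0.0],
    "apple": [0.0, 0.0, 0.9],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


queen_vs_princess = cosine_similarity(word_vectors["queen"], word_vectors["princess"])
queen_vs_king = cosine_similarity(word_vectors["queen"], word_vectors["king"])
queen_vs_apple = cosine_similarity(word_vectors["queen"], word_vectors["apple"])
```

With these made-up numbers, queen comes out closest to princess, somewhat close to king, and unrelated to apple, which is roughly the behavior we want from a representation that reflects meaning.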

Okay, but what about the word castle, for example? It could be a grand medieval fortress, a children's play structure in the backyard, or even a strong strategic position in a board game. Three very different ideas. So, what will happen in our Excel row? Well, for years nothing happened, and that's why automatic translators gave us more reasons to scoff than high-quality translations.

And that's where the scientists came in, proposing the attention mechanism. Attention is a transformation that updates a word's vector based on the vectors of the words around it; in other words, we know words by the company they keep. So if the words drawbridge and castle appear in the same sentence, it will be clear both to us and to the calculator on steroids that this castle has walls and perhaps a moat.
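
The idea of updating a vector from its neighbors can be sketched in a few lines. This is a heavily simplified version, not the mechanism used in real transformers: it assumes two invented features per word and skips the learned query, key, and value matrices, the scaling, and the multiple heads. The function name `attend` and all the numbers are hypothetical.

```python
import math


def softmax(scores):
    """Turn arbitrary scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, context):
    """Replace `query` with a weighted average of the context vectors.

    The weight of each context vector grows with its dot product with the query.
    """
    scores = [sum(q * k for q, k in zip(query, vec)) for vec in context]
    weights = softmax(scores)
    return [sum(w * vec[i] for w, vec in zip(weights, context))
            for i in range(len(query))]


# Hypothetical two-feature vectors: [fortress-ness, chess-ness]
the = [0.0, 0.0]
castle = [0.5, 0.5]  # ambiguous on its own
drawbridge = [1.0, 0.0]
rook = [0.0, 1.0]

castle_near_drawbridge = attend(castle, [the, castle, drawbridge])
castle_near_rook = attend(castle, [the, castle, rook])
```

The same ambiguous castle ends up leaning toward the fortress in one sentence and toward the chessboard in the other, purely because of the company it keeps.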

The attention mechanism allows LLMs to recalibrate word weights based on their context, so that they can focus on the most important parts of the text. This makes LLMs understand content better and generate more meaningful responses.

That's how our digital intelligence got a little less artificial.

What are GPT, LLM and neural network

What does the GPT in ChatGPT mean? It is an acronym for Generative Pre-trained Transformer.

Transformer is a neural network architecture whose essence is the transformation of something into something else. The original goal of this architecture was to create a better automatic translator. The transformer architecture also handles other forms of transformation well. It can transform long text into short text, text into image, text into music, etc. Some say it also turns programmers into unemployed people.

Generative suggests that it is a technology that creates something. This is in contrast to the first applications of neural networks, which were usually passive. Such a network can recognize a pattern, e.g. a fingerprint, and unlock our phone based on it. Here the network generates something for us, hence it is generative.

Pre-trained is in turn a reference to a rather specific learning procedure, which we will discuss later.

LLM (Large Language Model) is a large language model. There is nothing unusual here. Although people in the industry like to come up with overly complicated or pretentious names, this time what you see is what you get.

A neural network, on the other hand, is a computational model. You can think of it as an enormous mathematical function. Inside this function sits something like a large Excel workbook with many sheets, each containing a large table of numbers (these are the weights, in the sense of the weighted sum [2] that is calculated there). These numbers act like many small sliders, each influencing the result in a certain way [4]. If our network is to recognize a fingerprint, we feed it an image (bitmap) of the finger and expect a decision at the output. We immodestly call such a run inference. Calibrating these sliders so that the network accepts the right fingers and rejects the wrong ones is what we call learning.
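
For the curious, a single inference through a tiny network might look like the sketch below. The weights, the four-pixel "image", and the two-sheet layout are all invented for illustration. The text does not say which function follows each weighted sum, so the rectifier (`relu`) is an assumption borrowed from the topic of [4].

```python
def relu(x):
    """Rectifier: pass positive values through, clamp negatives to zero."""
    return max(0.0, x)


def layer(inputs, weights, biases):
    """One 'sheet' of the big Excel: each row of weights yields one output number."""
    return [relu(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]


# Hypothetical four-pixel bitmap and hand-set sliders (weights).
pixels = [0.0, 1.0, 1.0, 0.0]
hidden_weights = [[1.0, -1.0, 0.5, 0.0],
                  [-0.5, 1.0, 1.0, -0.5]]
hidden_biases = [0.0, -0.5]
output_weights = [[1.0, 1.0]]
output_biases = [0.0]

# Inference: push the input through the sheets, one after another.
hidden = layer(pixels, hidden_weights, hidden_biases)
score = layer(hidden, output_weights, output_biases)[0]
```

Here `hidden` comes out as `[0.0, 1.5]` and `score` as `1.5`. Learning would mean nudging the numbers in `hidden_weights` and `output_weights` until the score is high for the right finger and low for the wrong one.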

In the case of LLMs, the neural network receives our query together with its context as input, and returns as output a vector (one row of Excel) with the probability of each possible next text fragment. We call such a fragment a token. A token can be a word, a fragment of it, or some other wonder (for simplicity we will continue to pretend that LLMs operate on words and not tokens). In any case, the important thing is that one pass of the neural network (one inference) gives us only one word. So, in order to get a longer text out of it, we have to wait a bit. To make the wait more pleasant, the creators of LLM-based chats display the text on the screen as it is generated.
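
The one-word-per-inference loop can be sketched as follows. The `TOY_MODEL` table is a hypothetical stand-in for the neural network: it looks only at the last word, whereas a real LLM looks at the whole context. Always picking the most probable word is also a simplification chosen here to keep the example deterministic.

```python
# Hypothetical stand-in for a neural network: last word -> next-word probabilities.
TOY_MODEL = {
    "<start>": {"the": 0.9, "a": 0.1},
    "the": {"castle": 0.6, "moat": 0.4},
    "castle": {"has": 0.7, "<end>": 0.3},
    "has": {"a": 0.8, "the": 0.2},
    "a": {"moat": 0.9, "castle": 0.1},
    "moat": {"<end>": 1.0},
}


def next_word_probabilities(words):
    """One 'inference': probabilities of the next word given the text so far."""
    return TOY_MODEL[words[-1]]


def generate(max_words=10):
    """Build a text one word at a time, one inference per word."""
    words = ["<start>"]
    for _ in range(max_words):
        probs = next_word_probabilities(words)
        best = max(probs, key=probs.get)  # greedy choice: the most probable word
        if best == "<end>":
            break
        words.append(best)
    return " ".join(words[1:])


text = generate()  # "the castle has a moat"
```

Five words required six inferences (the last one only to learn that the sentence was over), which is why longer answers take a while to appear.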

Primary and secondary socialization of LLMs

We send a newborn LLM to two schools: first to primary school (pre-training), where we will teach it basic facts about the world, as well as reading and writing, and then to vocational school (fine-tuning), where we will teach it the profession of a helpful assistant. In primary school, something like primary socialization will take place, in which our LLM will transform into a clever token generator that complies with elementary social and grammatical norms. In vocational school, on the other hand, it will undergo secondary socialization for its final professional role.

Primary education is a bit like making scrambled eggs for breakfast. First we need the main ingredient, which will be the body of text for learning. This corpus must be of good quality, otherwise the scrambled eggs will be indigestible. At the same time, it should be large enough to satisfy our hunger for knowledge. Fortunately, we have the world wide web, where there is plenty of text. It is true that obtaining texts from the web may hurt the feelings of their authors and copyright lawyers, but as the great innovators of Silicon Valley say, to make scrambled eggs you have to break a few eggs.

These texts should naturally be subjected to strict selection. We don't want our pupil to be saturated with knowledge about women from incel forums, to learn medicine from anti-vaxxers, astronomy from flat-earthers, or ecumenism from jihadists. We also don't want our LLM to recite advertising jingles to us later. So such a carefully purified decoction of the Internet will be our learning corpus.

The learning itself consists of training a neural network to effectively predict the probability of the next word appearing in a text sequence. The algorithm roughly looks like this: we randomly select a sequence (a sentence or a few sentences) in the corpus, we give the network this sequence minus the last word (token) as input, and we see how the network assessed the probability of the last word occurring. The higher, the better - this is our objective function (at the beginning, the network parameters are set to random values, so the result will also be random). Then we do the so-called backpropagation, i.e., for each of the network parameters, we calculate which way to move it a little so that the result at the end is closer to expectations. Then we draw another sequence and so on.
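
A drastically reduced version of one such training step is sketched below. It is an illustration under several assumptions: the "network" is just one adjustable number (logit) per word of a three-word vocabulary, it ignores the input sequence entirely, and it starts from zeros rather than random values so that the result is reproducible. The objective is written as cross-entropy, i.e. the negative logarithm of the probability assigned to the correct word, so maximizing that probability and minimizing the loss are the same thing. Real backpropagation pushes the same kind of correction through every layer of the network.

```python
import math

VOCAB = ["moat", "banana", "castle"]  # hypothetical three-word vocabulary


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(logits)
    exps = [math.exp(v - highest) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def training_step(logits, target_index, learning_rate=0.5):
    """Move each parameter a little so the target word becomes more probable."""
    probs = softmax(logits)
    # For softmax followed by cross-entropy loss, the gradient with respect to
    # logit i is: prob_i - (1 if i is the target else 0).
    grads = [p - (1.0 if i == target_index else 0.0) for i, p in enumerate(probs)]
    return [v - learning_rate * g for v, g in zip(logits, grads)]


logits = [0.0, 0.0, 0.0]  # untrained: every word equally likely
target = VOCAB.index("moat")  # the word that actually ended the sequence
before = softmax(logits)[target]
for _ in range(20):
    logits = training_step(logits, target)
after = softmax(logits)[target]
loss_after = -math.log(after)
```

After twenty small nudges the probability of the correct word (`after`) is clearly higher than the one-in-three it started with (`before`), at the expense of banana and castle.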

Neural network training is simply a process of optimizing the objective function. If the function instead measures the size of the error, we look for its minimum. This is our digital equivalent of frying scrambled eggs. The basic analogy holds up well. If we fry for too short a time, our LLM will be undertrained and may later vote for populists or hallucinate about electric sheep. If we fry too long, we will go bankrupt due to the electricity bill. The phenomenon of overfitting may also occur: our network will learn the source texts by heart and, instead of generalizing nicely from the given examples, it will mindlessly recite them, including typos. This can have various unpleasant consequences. The aforementioned copyright lawyers are just waiting for such blunders.

Primary school for LLMs takes quite a long time, because both this body of text and our Excel with parameters really deserve the first "L" in LLM. This time is measured in months and the project budget in millions of USD. Don't try this at home.

An LLM after primary school can "only" continue a given sequence with the next words. So if we give it the first sentence of some Wikipedia article as input, it will add the rest. Incidentally, probably word for word, because copying Wikipedia into corpora of this type is standard practice. So our LLM can already write, and it also has a memory saturated with source texts, which give it some elementary knowledge of the world. At this stage, however, our model is only a generator of the rest of a text, so it would be quite difficult to use it for any practical application.

That's why further education in vocational school (fine-tuning) is necessary. Its goal is to transform our token generator into an assistant that answers questions. Here we will show our LLM scripts prepared by human experts. A script is a question paired with a very high quality answer. The collection of these scripts is a closely guarded secret of the companies producing LLMs. The methodologies for producing them are also evolving dynamically. This is where our pupil gains refinement and learns the culture of the word, respect for facts, and social conventions. To a degree, of course, proportional to their occurrence in the scripts shown to it.

The algorithm for teaching a neural network in vocational school is analogous, except that this time we teach the network to answer questions (i.e., the input to the network is a question, also called a prompt), and we evaluate the quality of the answer by comparing it with an expert script. This stage is much cheaper and faster, because there are incomparably fewer scripts than text in the primary school corpus, and also our network no longer has completely random weights. So in our breakfast analogy, this can be compared to sprinkling chives on scrambled eggs, making a sandwich and coffee.

My scrambled eggs are better than yours

The quality of an LLM's knowledge is burdened with a number of imperfections, which are a natural consequence of how it is built. All the distortions and gaps in the corpus will be reflected in the neural network of our model. Thus, the better a given language is represented in publicly available Internet texts, the better the LLM will learn it. That's why all LLMs will be fluent in English, but I wouldn't expect great support for the Kashubian language. Topics widely described on the web, such as IT knowledge, will be acquired very decently. However, specialized topics, available mostly in books, behind paywalls, or even only on paper, will remain there.

Another problem is a certain democracy of the learning process. If there are ten average texts with outdated knowledge for every great text with current and accurate knowledge, the former will win. For this reason, general-purpose LLMs will tend to repeat the most common opinions on the public Internet. Unless, of course, these opinions are controversial enough to be censored, either when selecting data for the corpus or when preparing the professional scripts. LLM chats also have additional built-in defense mechanisms. For example, before a question goes to the actual "engine", it must first pass through a censorship filter, which checks whether the question is sufficiently ethical (we do not want to help people build bombs or poisons). Sometimes we also have a post hoc filter, i.e., censorship of the response of the actual model. A certain Chinese model became famous for self-censoring after generating a factually correct but politically incorrect answer about Tiananmen Square.
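
The "democracy" described at the start of the paragraph above can be illustrated with plain counting. This is only a stand-in: a trained network is not a table of counts, but maximizing the probability of the next word pulls its predictions toward the frequencies found in the corpus. The eleven-text corpus and the function name `next_word_distribution` are hypothetical.

```python
from collections import Counter

# Hypothetical mini-corpus: ten outdated texts for every accurate one.
corpus = ["pluto is a planet"] * 10 + ["pluto is a dwarf planet"] * 1


def next_word_distribution(texts, prefix):
    """Estimate P(next word | prefix) by counting continuations in the texts."""
    prefix_words = prefix.split()
    n = len(prefix_words)
    counts = Counter()
    for text in texts:
        words = text.split()
        if words[:n] == prefix_words and len(words) > n:
            counts[words[n]] += 1
    total = sum(counts.values())
    return {word: count / total for word, count in counts.items()}


distribution = next_word_distribution(corpus, "pluto is a")
```

Ten votes beat one: `distribution` gives "planet" a probability of 10/11 and "dwarf" only 1/11, regardless of which text happens to be right.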

Human knowledge is by no means some uncontroversial consensus. Quite the opposite. The infosphere is an area of political, economic, cultural, and aesthetic wars. Many companies would like to influence the answers of popular LLMs as to which car or washing powder is best to buy. State governments have differing opinions on the political status of Crimea or Taiwan. When preparing a corpus of text for learning, we must make a number of decisions about censoring certain points of view and promoting others. An LLM is therefore a projection of the values of the cultural and political circle from which its producer originates, as well as of the spirit of the time in which the corpus was created.

Show me your scrambled eggs, and I'll tell you who you are.

Do stochastic parrots hallucinate about electric sheep?

What can LLMs actually do? As we have already established, the heart of an LLM is a generator of the probability distribution of the next token in a text sequence. This is surrounded by a certain amount of logic, which provides us with the relatively safe service of a helpful assistant, ready to carry out commands and answer questions. In fact, the basic competence of an LLM is paraphrasing text. That text can be learned in primary or vocational school. It can be obtained from an internet search engine. It can be provided by the user. No more and no less.

This is where the metaphor of the stochastic parrot [6] comes from. An LLM does not produce new knowledge; it only parrots (paraphrases) existing knowledge. In addition, it does so stochastically, so it is a kind of casino where answers are drawn on a large roulette wheel of tokens. It is not without reason that LLM producers warn against asking their products for medical, legal, or any other advice where a wrong answer could result in the bloodthirsty copyright lawyers being joined by lawyers of other specialties.
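
The roulette wheel can be sketched with the standard library. The probabilities below are invented, and drawing directly in proportion to them is an assumption; real chat services typically add further knobs that this sketch ignores.

```python
import random

# Hypothetical probabilities returned by one inference for the next token.
next_token_probs = {"yes": 0.7, "no": 0.2, "maybe": 0.1}


def sample_token(probs, rng):
    """Spin the wheel: draw one token with chance proportional to its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


rng = random.Random(42)  # fixed seed so the experiment can be repeated
draws = [sample_token(next_token_probs, rng) for _ in range(1000)]
counts = {token: draws.count(token) for token in next_token_probs}
```

Ask the same question a thousand times and the most probable answer dominates, but the less probable ones still come up now and then, which is exactly why the same prompt can yield different replies.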

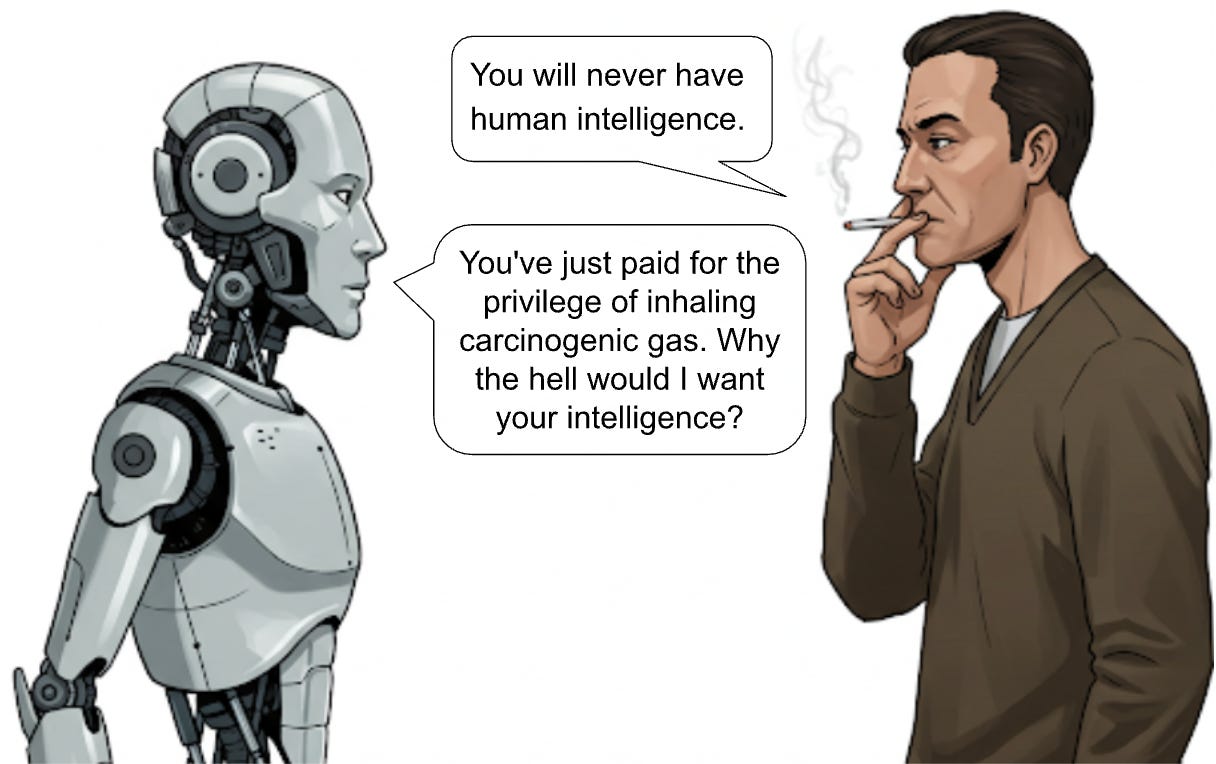

You can look at it as a glass half empty or half full. Technological skeptics will say that this is the ultimate proof of the indolence of LLMs and their inevitable inferiority to our human, shocking intellectual potential. Enthusiasts, on the other hand, will say, so what? The Internet is "only" a network of local computer networks, and it was enough to revolutionize the world. A cell phone is "only" a pocket PC with a radio. Finally, does humanity really make good use of all the knowledge already available, so that the inability of LLMs to generate new knowledge should be any particular limitation?

Paraphrasing text is a basic competence that is expected of us in the education process. Almost all exams from elementary school to professional certificates consist of either answering a series of questions about a given text, writing an essay on a topic described in another text, or solving a mathematical problem. LLMs do a great job with the first two, and for the third there is other software (e.g. Wolfram Alpha). This tells us something not only about the development of computer science, but perhaps even more about our education systems.

What is a good part of the work of a translator, journalist, teacher, hotline employee, loan advisor, salesman, and many, many others, if not paraphrasing text?

To some extent, we are all stochastic parrots.

The Unbearable Anthropomorphization of a Parrot's Being

People tend to project their humanity onto their surroundings. We say that the computer thinks when it doesn't respond for a moment. We say that the computer doesn't like us when something doesn't work out for us. We smile at graphical interfaces.

When we hear a given word, for example child, it evokes in us a number of emotional reactions, associations with our own childhood, the experience of being a parent, or familiar family stories from literature and television. It reminds us of emotions, smells, touches, sounds. We have many sources of knowledge that we can use to understand a situation and be able to relate to it.

An LLM has not experienced life. It did not father a son, did not plant a tree, did not build a house. It is like an extremely autistic person with an almost absolute memory, who has read the entire web and can quote it from memory. For an LLM, a word is a vector in a semantic space, created in the learning process. It does not evoke feelings or activate memories, because an LLM does not have them. An LLM has only textual memory, shining with the reflected light of the wisdom of the people who wrote the source texts. These texts may contain descriptions of emotions and sensory experiences, and these descriptions may be quoted and thus create the impression of a deeper understanding than is really there.

I don't like the term artificial intelligence and I deliberately avoided it in this text. It is a marketing term that misleads non-technical people, reinforcing the tendency to anthropomorphize this technology.

A computer is a calculator on steroids. A neural network is a large Excel. An LLM is a text paraphrasing machine. Yes, it is a great technology with powerful potential and will undoubtedly drive the next wave of the digital revolution, automating more areas of our lives. However, it is not a philosopher's stone. Let's try to see it as it really is.

Bibliography

To those who are eager for real knowledge, devoid of simplifications, jokes and memes, but properly saturated with mathematics, I recommend reading the following works.

[1] Turing (1950), Computing Machinery and Intelligence, https://courses.cs.umbc.edu/471/papers/turing.pdf

[2] Rosenblatt (1958), The Perceptron: A Probabilistic Model for Information Storage and Organization in the Brain, https://www.ling.upenn.edu/courses/cogs501/Rosenblatt1958.pdf

[3] LeCun, Boser, Denker, Henderson, Howard, Hubbard (1989), Backpropagation Applied to Handwritten Zip Code Recognition, https://ieeexplore.ieee.org/document/6795724

[4] Glorot, Bordes, Bengio (2011), Deep Sparse Rectifier Neural Networks, https://proceedings.mlr.press/v15/glorot11a/glorot11a.pdf

[5] Vaswani, Shazeer, Parmar, Uszkoreit, Jones, Gomez, Kaiser, Polosukhin (2017), Attention Is All You Need, https://arxiv.org/pdf/1706.03762

[6] Bender, Gebru, McMillan-Major, Shmitchell (2021), On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?, https://dl.acm.org/doi/pdf/10.1145/3442188.3445922